Aurelia and Pusher Collection

In this post, we are going to look at using Pusher and building a real-time collection. Just like our post on Firebase Collection, we are going to cover all the database CRUD (create, retrieve, update, delete) capabilities.

Pusher is a powerful solution when it comes to building real-time features in your applications. We are going to be looking at the Channels product. Let’s get started by looking at what it takes to build a real-time collection using the Pusher API in Aurelia.

In the following example, we are going to be making the assumption that you are storing your data in a database like MongoDB. This implies that you will have a unique key, _id. As you will see, we will use this in our PusherCollection implementation.

PusherService

The first code example we will look at will deal with configuring the Pusher API:

import Pusher from 'pusher-js';

import environment from '../environment.js';

const {baseUrl, apiKey, cluster, forceTLS} = environment.pusherConfig;

export class PusherService {

constructor() {

// Enable pusher logging - don't include this in production

// Pusher.logToConsole = true;

this.pusher = new Pusher(apiKey, {

cluster: cluster,

forceTLS: forceTLS,

authEndpoint: `${baseUrl}pusher/auth`

});

}

subscribe(channelName, eventName, dataHandler) {

const channel = this.pusher.subscribe(channelName);

channel.bind(eventName, dataHandler);

}

unsubscribe(channelName) {

this.pusher.unsubscribe(channelName);

}

}

With this class, we wrap the Pusher object, passing in configuration settings from our environment.js file. Basically, we are creating a new instance of the Pusher object. Notice that an authEndpoint is included in case you want to use private or presence channels from Pusher.

The final pieces are the two functions, subscribe and unsubscribe. The subscribe function subscribes to a given channel and binds a handler that reacts whenever the given event fires on that channel. The unsubscribe function simply unsubscribes from the channel on the Pusher object.
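To see the subscribe and unsubscribe flow without a network connection, here is a minimal sketch that swaps the real client for an in-memory stand-in. FakePusher, its emit helper, and TestablePusherService are hypothetical names made up for this illustration; they are not part of pusher-js. The only assumption about the real client is the shape already used above: subscribe returns a channel with bind, and unsubscribe takes a channel name.

```javascript
// Hypothetical in-memory stand-in for the Pusher client (not part of pusher-js).
class FakePusher {
  constructor() {
    this.channels = new Map();
  }

  // Mirrors pusher.subscribe(channelName): returns an object with bind().
  subscribe(channelName) {
    if (!this.channels.has(channelName)) {
      this.channels.set(channelName, new Map());
    }
    const handlers = this.channels.get(channelName);
    return {
      bind(eventName, handler) {
        const list = handlers.get(eventName) || [];
        list.push(handler);
        handlers.set(eventName, list);
      }
    };
  }

  // Mirrors pusher.unsubscribe(channelName): drops every handler on the channel.
  unsubscribe(channelName) {
    this.channels.delete(channelName);
  }

  // Test-only helper: simulates the server publishing an event.
  emit(channelName, eventName, data) {
    const handlers = this.channels.get(channelName);
    if (!handlers) {
      return;
    }
    (handlers.get(eventName) || []).forEach(handler => handler(data));
  }
}

// Same shape as PusherService, but the client is passed in instead of created.
class TestablePusherService {
  constructor(pusher) {
    this.pusher = pusher;
  }

  subscribe(channelName, eventName, dataHandler) {
    this.pusher.subscribe(channelName).bind(eventName, dataHandler);
  }

  unsubscribe(channelName) {
    this.pusher.unsubscribe(channelName);
  }
}

const fake = new FakePusher();
const service = new TestablePusherService(fake);
const received = [];

service.subscribe('quotes', 'item-added', data => received.push(data));
fake.emit('quotes', 'item-added', {_id: '1', text: 'hello'});

// After unsubscribing, events on the channel no longer reach the handler.
service.unsubscribe('quotes');
fake.emit('quotes', 'item-added', {_id: '2', text: 'ignored'});

console.log(received.length); // 1
```

Passing the client into the constructor is a design choice for this sketch only; it makes the wrapper easy to exercise in a unit test.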

PusherCollection

Let’s move on to our PusherCollection object:

import {Container} from 'aurelia-dependency-injection';

import {PusherService} from '../services/pusher-service.js';

export class PusherCollection {

constructor(config = {dependencies: [], channel: '', initItems: [], callbacks: {}}) {

this.pusherSvc = Container.instance.get(PusherService);

const {channel, initItems = [], callbacks = {}} = config;

this.channel = channel;

this.items = initItems;

this.callbacks = callbacks;

this.listen();

if (this.callbacks['init']) {

this.callbacks['init']();

}

}

listen() {

this.pusherSvc.subscribe(this.channel, 'item-added', this.onItemAdded.bind(this));

this.pusherSvc.subscribe(this.channel, 'items-added', this.onItemsAdded.bind(this));

this.pusherSvc.subscribe(this.channel, 'item-removed', this.onItemRemoved.bind(this));

this.pusherSvc.subscribe(this.channel, 'items-removed', this.onItemsRemoved.bind(this));

this.pusherSvc.subscribe(this.channel, 'item-updated', this.onItemUpdated.bind(this));

this.pusherSvc.subscribe(this.channel, 'items-updated', this.onItemsUpdated.bind(this));

}

stopListening() {

this.pusherSvc.unsubscribe(this.channel);

this.pusherSvc = null;

}

async onItemAdded(data) {

this.items.push(data);

if (this.callbacks['item-added']) {

this.callbacks['item-added']();

}

}

async onItemsAdded(data = []) {

this.items.push(...data);

if (this.callbacks['items-added']) {

this.callbacks['items-added']();

}

}

async onItemRemoved(data) {

const {_id} = data;

const index = this.items.findIndex(c => c._id === _id);

// findIndex returns -1 when nothing matches; only splice on a valid index

if (index > -1) {

this.items.splice(index, 1);

}

if (this.callbacks['item-removed']) {

this.callbacks['item-removed']();

}

}

async onItemsRemoved(data = []) {

for (let d of data) {

const {_id} = d;

const index = this.items.findIndex(c => c._id === _id);

// Skip ids that are not in the collection

if (index > -1) {

this.items.splice(index, 1);

}

}

if (this.callbacks['items-removed']) {

this.callbacks['items-removed']();

}

}

async onItemUpdated(data) {

const {_id} = data;

const index = this.items.findIndex(c => c._id === _id);

if (index > -1) {

if (Array.isArray(this.items[index])) {

this.items[index].push(data);

} else {

this.items[index] = Object.assign(this.items[index], data);

}

if (this.callbacks['item-updated']) {

this.callbacks['item-updated']();

}

}

}

async onItemsUpdated(data = []) {

for (let d of data) {

const {_id} = d;

const index = this.items.findIndex(c => c._id === _id);

// Merge the individual item (d), not the whole data array

if (index > -1) {

this.items[index] = Object.assign(this.items[index], d);

}

}

if (this.callbacks['items-updated']) {

this.callbacks['items-updated']();

}

}

}

We start off by importing Container and PusherService. We use the Container to allow us to get an instance of the PusherService. The constructor takes a single config object. It is comprised of the following:

- dependencies – a collection of dependencies that could possibly be necessary

- channel – the channel name

- initItems – the initial array of items

- callbacks – callback handlers for individual events

We wire up all the handlers and events for a given channel by calling the listen() function. If there is an init callback, we also call it.

listen()

This function simply subscribes to a set of events and wires up each with a handler.

stopListening()

This function unsubscribes all events for a given channel and releases the instance of the PusherService.

onItemAdded(data)

This function handles when an item is added. It pushes the data onto the collection. If a callback, item-added, is provided, then it is called.

onItemsAdded(data = [])

This function handles when multiple items are added. It does this by pushing all the items onto the collection. If a callback, items-added, is provided, then it is called.

onItemRemoved(data)

This function handles when an item is removed. It grabs the _id from the data object and finds the index in the items collection. If a valid index is found, then it is spliced from the items collection. If a callback, item-removed, is provided, then it is called.

onItemsRemoved(data = [])

This function handles when multiple items are removed. It does this by looping over the data collection. It grabs the _id from each individual item and finds the index in the items collection. If a valid index is found, then it is spliced from the items collection. If a callback, items-removed, is provided, then it is called.

onItemUpdated(data)

This function handles when an item is updated. It grabs the _id from the data object and finds the index in the items collection. If a valid index is found, then the location is updated with the data object. If a callback, item-updated, is provided, then it is called.

onItemsUpdated(data = [])

This function handles when multiple items are updated. It does this by looping over the data collection. It grabs the _id from each individual item and finds the index in the items collection. If a valid index is found, then it is updated with the data object. If a callback, items-updated, is provided, then it is called.
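The "valid index" check in the remove and update handlers matters because findIndex returns -1 when nothing matches, and splice(-1, 1) would then remove the last item in the array. Here is a minimal sketch of the guarded logic as standalone functions. The names removeById and updateById are made up for this illustration and are not part of the PusherCollection class.

```javascript
// Remove the item whose _id matches; leave the array untouched when nothing matches.
function removeById(items, _id) {
  const index = items.findIndex(item => item._id === _id);
  if (index > -1) {
    items.splice(index, 1);
  }
  return index > -1;
}

// Merge the changed fields into the matching item, keeping the same object reference.
function updateById(items, changes) {
  const index = items.findIndex(item => item._id === changes._id);
  if (index > -1) {
    Object.assign(items[index], changes);
  }
  return index > -1;
}

const items = [
  {_id: 'a', price: 10},
  {_id: 'b', price: 20}
];

removeById(items, 'missing');              // no match: nothing is removed
updateById(items, {_id: 'b', price: 25});  // merges the new price into item b
removeById(items, 'a');

console.log(items); // [ { _id: 'b', price: 25 } ]
```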

Events and Callbacks

As you have already noticed, we are using a convention of events:

- item-added

- items-added

- item-removed

- items-removed

- item-updated

- items-updated

This allows us to have a generic data API so that we can handle any CRUD operation for a given channel. Think of a channel as a table or collection in your database.

We also have a set of callbacks:

- init

- item-added

- items-added

- item-removed

- items-removed

- item-updated

- items-updated

These callbacks provide a means for doing any custom UI operations like animations or moving to the corresponding change.
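On the publishing side, the naming convention can be captured in one small helper, so every table or collection uses the same event names. This is only a sketch: eventNameFor is a hypothetical helper, and the assumption is that a single object is sent for the singular events and an array for the plural ones, which is what the handlers above expect.

```javascript
const ACTIONS = ['added', 'removed', 'updated'];

// Picks the singular or plural event name based on the payload shape.
function eventNameFor(action, payload) {
  if (!ACTIONS.includes(action)) {
    throw new Error(`Unknown action: ${action}`);
  }
  return Array.isArray(payload) ? `items-${action}` : `item-${action}`;
}

console.log(eventNameFor('added', {_id: '1'}));                  // item-added
console.log(eventNameFor('removed', [{_id: '1'}, {_id: '2'}]));  // items-removed

// On a server using the Pusher server library, the result would be passed as
// the event name, for example:
// pusher.trigger('quotes', eventNameFor('added', doc), doc);
```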

UI Sample

This is where Aurelia really shines. We now have a real-time object in the form of a PusherCollection. It exposes an items property, and we can simply bind to it in our HTML markup as we would any other array.

View

<table>

...

<tbody>

<tr repeat.for="item of quoteItems.items">

...

</tr>

</tbody>

</table>

This simplified markup focuses on the binding of the items collection.

View Model

The following snippet is how you would use the PusherCollection in a given view model:

// Load initial data

// data = [...] or []

// Wire up Pusher

const channelName = 'quotes';

const config = {

dependencies: [],

channel: channelName,

initItems: data,

callbacks: {

'init': async () => {

await wait(750);

this.scrollToBottom();

},

'item-added': async () => {

await wait(250);

this.scrollToBottom();

}

}

}

this.quoteItems = new PusherCollection(config);

In our view model, we either load up initial data or have an empty array. We set the channelName, initItems, and callbacks. In this example, we are wiring up the init callback to scroll to the bottom of the array of data. In the item-added callback we perform the same operation. We can do pretty much anything we want to help notify the user of changes coming in.
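The callbacks above call a wait function that is not shown in the snippet. A minimal version, assuming it is nothing more than a promise-based delay, could look like the following. The afterItemAdded function is a made-up wrapper that takes the scroll function as an argument so the sketch stays self-contained.

```javascript
// Assumed helper: resolves after roughly `ms` milliseconds.
const wait = ms => new Promise(resolve => setTimeout(resolve, ms));

// Mirrors the 'item-added' callback: pause briefly, then scroll.
async function afterItemAdded(scrollToBottom) {
  await wait(250); // give the repeater time to render the new row
  scrollToBottom();
}
```

The short delay is there so the new row exists in the DOM before scrolling. The exact number of milliseconds is a judgment call.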

UI

Here is a screen shot of a table wired to a PusherCollection in action:

Hope this helps!

JSON.parse and the reviver function

Building applications that store their data in NoSQL databases like MongoDB can sometimes be a challenge when dealing with dates and, even, IDs. In your front-end application, you could have put together the nicest form and have your dates formatted exactly as you like them but when you perform a POST to create a new record, the date ends up getting stored as a string.

Problem Statements

MongoDB really wants your dates in your documents to be date types. If you ever need to do any complex queries or aggregates, you will find that dates stored as a string type just do not work.

The same is true for the _id that MongoDB manages for you. It internally becomes an ObjectId. If you want to perform a PUT to update an existing record, you will need to pass a filter criteria. If that criteria contains the _id property, it needs to be represented as an ObjectId.

Why are these a problem?

The problem arises when we try to serialize our data and send it to our REST endpoints. The most common way to serialize JSON objects is to use JSON.stringify. Consider, for example, we have the following employee object from our front-end:

{

first_name: "Matthew",

last_name: "Duffield",

start_date: Sun Jun 30 2019 17:00:00 GMT-0700 (Pacific Daylight Time)

}

As you can see, the start_date is represented as a native Date object. If we were to serialize this object using JSON.stringify and send it to our REST endpoint, we would see the following:

{

"first_name": "Matthew",

"last_name": "Duffield",

"start_date": "2019-07-01T00:00:00.000Z"

}

Our date is no longer a native object, but a string representation. When we process this on our server, what do you think will be stored? We will get a string instead of a date.

OK, I get it. So, how do we fix this?

On the server, when we receive a request, we will want to parse the body of the request. I am sure you have used JSON.parse a thousand times and never even thought about the second parameter. I know that is what I have done. Well, JSON.parse accepts an optional second parameter, a reviver function. This function is used to perform a transformation on the resulting object before it is returned. It runs for every property in the parsed JSON, including nested ones, so be careful what you put into it.

You will see that the function has two parameters: key and value. This makes sense as we are simply traversing over all the properties on the object.
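To make the traversal concrete, here is a small sketch that records every key the reviver sees. Nested properties are visited before their parent, and the final call receives an empty string as the key along with the fully parsed root value.

```javascript
const visited = [];

const result = JSON.parse('{"a": 1, "b": {"c": 2}}', (key, value) => {
  visited.push(key);
  return value; // returning the value unchanged keeps the property as-is
});

console.log(visited); // [ 'a', 'c', 'b', '' ]
```

Whatever the reviver returns becomes the value of that property, and returning undefined removes the property from the result.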

Let’s take a look at what an implementation might look like:

function reviver(key, value) {

if (typeof value === 'string') {

if (Date.parse(value)) {

return new Date(value);

} else if (key === '_id') {

return ObjectId(value);

}

}

return value;

}

Okay, what are we doing here? This reviver function is checking the type of the value. If it is of type string, then it performs an additional check to see if the value is a valid date. If it is a valid date, then we return a new date object. If the value is not a valid date, then we check to see if the key has the name of _id. If true, then we return an ObjectId of the value. Finally, if neither of these checks passes, then we simply return the value as is.

If you use the reviver function on the sample payload above, everything works just fine. This will now deserialize your date strings back to what they should be, date objects.

{

first_name: "Matthew",

last_name: "Duffield",

start_date: Sun Jun 30 2019 17:00:00 GMT-0700 (Pacific Daylight Time)

}

Warning, Date.parse can be misleading

It turns out that Date.parse will eagerly accept values that aren’t really meant to be Dates. For example, if we were to add a postal_code property to our object and then call the JSON.stringify function, we would see the following:

{

"first_name": "Matthew",

"last_name": "Duffield",

"start_date": "2019-07-01T00:00:00.000Z",

"postal_code": "28210"

}

That looks like a perfect representation of our object and after we send our request over the wire to persist, our server would want to deserialize the string representation using our new reviver function. The following is what you would see:

{

first_name: "Matthew",

last_name: "Duffield",

postal_code: Mon Jan 01 28210 00:00:00 GMT-0800 (Pacific Standard Time),

start_date: Sun Jun 30 2019 17:00:00 GMT-0700 (Pacific Daylight Time)

}

As you can see, our postal_code has been converted over to a date! That just won’t work for us. So, let’s go back to the reviver function and modify it ever so slightly. Here is what we have now:

function reviver(key, value) {

if (typeof value === 'string') {

// Anchor at the start so only strings that begin with a date are converted

if (/^\d{4}-\d{1,2}-\d{1,2}/.test(value) ||

/^\d{4}\/\d{1,2}\/\d{1,2}/.test(value)) {

return new Date(value);

} else if (key === '_id') {

return ObjectId(value);

}

}

return value;

}

This time, we don’t use the Date.parse function. Instead, we have decided to force the front-end to always serialize dates in one of the following two formats: yyyy-mm-dd or yyyy/mm/dd. You may want to enforce a different rule; just make sure that it uniquely identifies dates.

Now that we have this change, let’s go ahead and deserialize our string again:

{

first_name: "Matthew",

last_name: "Duffield",

postal_code: "28210",

start_date: Sun Jun 30 2019 17:00:00 GMT-0700 (Pacific Daylight Time)

}

Things are now looking exactly as they should! The same thing will happen if you were to perform a PUT and wanted to update a record based on the _id. If you pass in a filter criteria in the form of JSON, then when the server deserializes it into an object, it would automatically be converted to an ObjectId.
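Putting it all together, here is a minimal round trip through JSON.stringify and JSON.parse with a reviver. It is a sketch under a few assumptions: FakeObjectId is a stand-in for the MongoDB driver's ObjectId so the code runs without the driver, the _id value is made up, and the two date patterns are anchored to the start of the string.

```javascript
// Stand-in for the MongoDB driver's ObjectId (hypothetical, for illustration only).
class FakeObjectId {
  constructor(hex) {
    this.hex = hex;
  }
}

function reviver(key, value) {
  if (typeof value === 'string') {
    // Only strings that start with yyyy-mm-dd or yyyy/mm/dd are treated as dates.
    if (/^\d{4}-\d{1,2}-\d{1,2}/.test(value) ||
        /^\d{4}\/\d{1,2}\/\d{1,2}/.test(value)) {
      return new Date(value);
    }
    if (key === '_id') {
      return new FakeObjectId(value);
    }
  }
  return value;
}

// What the front-end would send: the Date is serialized to an ISO string.
const body = JSON.stringify({
  _id: '5d1a2b3c4d5e6f7a8b9c0d1e',
  first_name: 'Matthew',
  postal_code: '28210',
  start_date: new Date(Date.UTC(2019, 6, 1))
});

// What the server would do with the request body.
const employee = JSON.parse(body, reviver);

console.log(employee.start_date instanceof Date); // true
console.log(typeof employee.postal_code);         // string
console.log(employee._id instanceof FakeObjectId); // true
```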

As you can see, the reviver function can really help out and keep your code clean. It removes the need to clutter your server side code with all the ceremony of remembering what properties were supposed to be dates and manually converting your _id properties.

Initial thoughts using Fastify

If you are a full-stack developer, then the decision as to which back-end server library to use has crossed your path multiple times. If you are a NodeJS developer, the choices are a little narrower, but you still have an overwhelming number of them. Probably the most popular are Express and Koa, but there is a new kid on the block that is truly amazing.

The creators of Fastify had one initial goal in mind, building the fastest server for NodeJS developers. When they looked at the existing open sourced frameworks, they discovered some pain points that could be corrected but that would introduce breaking changes to existing application programming interfaces (API). This led to the development of Fastify.

What is Fastify?

Fastify is a web framework highly focused on providing the best developer experience with the least overhead and a powerful plugin architecture. It is also considered one of the fastest frameworks out there. So how does it do this? Well, the simple answer is schema. In JavaScript, the process of serializing and deserializing objects is very expensive and inefficient. The authors of Fastify recognized this and decided to take the problem head-on. They came up with their own serialization engine that uses schema to speed up the process. By using the typings provided in a schema, Fastify can optimize the serialization process for any given operation. This includes both incoming requests and outgoing responses.
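As a rough illustration of what such a schema looks like, here is a sketch of route options that describe the URL parameters and the 200 response as JSON Schema. The /quotes/:id route, the quoteRouteOptions name, and the fields are made up for this example. The registration call is shown as a comment so the snippet runs without Fastify installed.

```javascript
// Route options in the shape Fastify accepts; the route and fields are hypothetical.
const quoteRouteOptions = {
  schema: {
    params: {
      type: 'object',
      properties: { id: { type: 'string' } },
      required: ['id']
    },
    response: {
      200: {
        type: 'object',
        properties: {
          _id: { type: 'string' },
          symbol: { type: 'string' },
          price: { type: 'number' }
        }
      }
    }
  }
};

// With Fastify installed, the options would be passed along with the handler:
// fastify.get('/quotes/:id', quoteRouteOptions, async (request, reply) => {
//   return { _id: request.params.id, symbol: 'ABC', price: 42 };
// });

console.log(Object.keys(quoteRouteOptions.schema)); // [ 'params', 'response' ]
```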

Fastify is also fully extendible via its hooks, plugins, and decorators.

What are Plugins?

As you write your server code, you always try to follow the don’t repeat yourself (DRY) principle and keep your code clean, but it can get messy quickly. In a typical Express server, you will find that you are requiring multiple packages as middleware to help configure security, parsing, database configurations, etc. If you were to look at your server.js file after you have completed a vanilla server implementation, you would see a healthy spread of custom code and middleware configurations.

Fastify tries to help you keep your code as clean as possible by introducing a Plugin model. In fact, you can even take it a step further by separating your development concerns by placing all of your third-party dependency configuration in a plugins folder and all of your implementation code in a services folder. This really cleans up your code and it also facilitates migrations from one cloud provider to another without a lot of provider specific code to change. By separating everything out, you can now have cloud provider specific plugins that will just work. If you want to try a different cloud provider, simply swap out that plugin for another cloud provider.

Fastify currently has 36 core plugins and 75 community plugins. But the story doesn’t end there: Fastify has a simple API that allows you to author your own plugin if you can’t find exactly what you are looking for.

If you wanted to ensure your endpoints enable CORS, you can use the fastify-cors plugin. If you wanted to support Web Sockets, you simply grab the fastify-websocket plugin. You want Swagger documentation, use the fastify-swagger plugin.

Fastify has a register function that allows you to bring in your plugin. This function takes two parameters: the plugin itself, which is typically resolved using require, and the configuration to provide to the plugin.
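Here is a minimal sketch of authoring and registering a plugin. A plugin is a function that receives the Fastify instance and the options passed to register. The dbConfigPlugin name, the dbConfig decoration, and the connection URL are made up for this example, and the standIn object is not Fastify; it only imitates decorate and register so the plugin can be exercised without installing anything.

```javascript
// A plugin receives the Fastify instance and the options given to register().
// The plugin name and the `dbConfig` decoration are hypothetical.
async function dbConfigPlugin(fastify, options) {
  fastify.decorate('dbConfig', { url: options.url });
}

// With Fastify installed, registration would look like this:
// const fastify = require('fastify')();
// fastify.register(dbConfigPlugin, { url: 'mongodb://localhost:27017/app' });

// Minimal stand-in (not Fastify) so the plugin can be exercised on its own.
const standIn = {
  decorate(name, value) {
    this[name] = value;
  },
  register(plugin, options) {
    return plugin(this, options);
  }
};

standIn.register(dbConfigPlugin, { url: 'mongodb://localhost:27017/app' });

console.log(standIn.dbConfig.url); // mongodb://localhost:27017/app
```

One thing to keep in mind with the real framework is that plugins are encapsulated, so a decoration added inside a plugin is scoped to that plugin's context unless the plugin is wrapped with the fastify-plugin package.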

What I really like about this architecture is that you can use an auto loader that will scan your plugins and services folders and automatically bring in all of your dependencies. This removes so much ceremony and manual coding by using a simple convention which is something I am a big fan of.

So why should I care?

I like to build robust systems that have very little redundancy. For example, if I needed to build a REST API, I would create a generic contract and have a single dynamic endpoint to handle each of the create, retrieve, update, and delete operations. This reduces the need to have a 1:1 implementation across all of your tables or collections. It requires a little more design up front, but the amount of code to maintain and test is tiny compared to the traditional verbose and redundant approach. Fastify helps with separation of concerns as well as building complex servers while still keeping things as simple as possible.

What’s next?

Take some time and play with Fastify. You may find that it resonates well with you. This was a very high-level introduction to Fastify and doesn’t even scratch the surface of all the capabilities you can use. I am very happy with the library! In a future post, we will take a look at a reference implementation that I use for my clients.

Aurelia and Firebase Collection

Building a vertical line of business application can get complicated quickly. It is easy to simply provide a forms over data solution that allows users to perform the various database CRUD (create, retrieve, update, delete) operations. However, you will find most clients are now demanding more mature and advanced user experiences. One such experience is providing real-time or near real-time updates. With the broad browser support of Web Sockets, this is not only feasible, but most Cloud providers offer their own flavor of integrating this feature in your application.

We will be looking at one such offering, Firebase Realtime Database. Although the example code that we will evaluate is Firebase Realtime Database specific, it would be easy to modify and work with Firestore or even your own REST API using Socket.io or SignalR.

Before we dive into looking at the code, it is important to understand what Web Sockets bring to the table and how they differ from HTTP. When serving static assets from a server, HTTP is the perfect protocol. However, you will find that it is limited with regard to long-lasting connections and bi-directional communication. Web Sockets give us a protocol that allows us to establish a connection with a server and then subscribe to events that we are interested in by listening for changes. If we were to build a chat application, we might want to listen to a message event in order to update our user interface.

The chat example would most likely have a collection of message objects, each with a set of properties that further describe the message and other metadata. It is possible to roll your own implementation and write a listener that is subscribing to message events and updates an items collection. Wouldn’t it be nice if you could simply create an instance of a socket aware object that could listen to inserts, updates, or deletes? This is where Firebase Collection comes in.

Code Example

This example code assumes you have working knowledge of Aurelia and have played with Google Firebase Realtime Database. Let’s take a look at the implementation:

import environment from '../environment';

export class FirebaseCollection {

firebaseConfig = environment.firebaseQuoteConfig;

query = null;

valueMap = new Map();

items = [];

constructor(path) {

if (firebase) {

const app = firebase.apps.find(f => f.name === path);

if (app) {

this.query = app.database().ref(path);

this.listenToQuery(this.query);

} else {

const fb = firebase.initializeApp(this.firebaseConfig, path);

this.query = fb.database().ref(path);

this.listenToQuery(this.query);

}

}

}

listenToQuery(query) {

query.on('child_added', (snapshot, previousKey) => {

this.onItemAdded(snapshot, previousKey);

});

query.on('child_removed', (snapshot) => {

this.onItemRemoved(snapshot);

});

query.on('child_changed', (snapshot, previousKey) => {

this.onItemChanged(snapshot, previousKey);

});

query.on('child_moved', (snapshot, previousKey) => {

this.onItemMoved(snapshot, previousKey);

});

}

stopListeningToQuery() {

this.query.off();

}

onItemAdded(snapshot, previousKey) {

let value = this.valueFromSnapshot(snapshot);

let index = previousKey !== null ?

this.items.indexOf(this.valueMap.get(previousKey)) + 1 : 0;

this.valueMap.set(value.__firebaseKey__, value);

this.items.splice(index, 0, value);

}

onItemRemoved(oldSnapshot) {

let key = oldSnapshot.key;

let value = this.valueMap.get(key);

if (!value) {

return;

}

let index = this.items.indexOf(value);

this.valueMap.delete(key);

if (index !== -1) {

this.items.splice(index, 1);

}

}

onItemChanged(snapshot, previousKey) {

let value = this.valueFromSnapshot(snapshot);

let oldValue = this.valueMap.get(value.__firebaseKey__);

if (!oldValue) {

return;

}

this.valueMap.delete(oldValue.__firebaseKey__);

this.valueMap.set(value.__firebaseKey__, value);

this.items.splice(this.items.indexOf(oldValue), 1, value);

}

onItemMoved(snapshot, previousKey) {

let key = snapshot.key;

let value = this.valueMap.get(key);

if (!value) {

return;

}

let previousValue = this.valueMap.get(previousKey);

let newIndex = previousValue !== null ? this.items.indexOf(previousValue) + 1 : 0;

this.items.splice(this.items.indexOf(value), 1);

this.items.splice(newIndex, 0, value);

}

valueFromSnapshot(snapshot) {

let value = snapshot.val();

if (!(value instanceof Object)) {

value = {

value: value,

__firebasePrimitive__: true

};

}

value.__firebaseKey__ = snapshot.key;

return value;

}

add(item) {

return new Promise((resolve, reject) => {

// ref is a property (not a method) in Firebase SDK 3 and later

let query = this.query.ref.push();

query.set(item, (error) => {

if (error) {

reject(error);

return;

}

resolve(item);

});

});

}

remove(item) {

if (item === null || item.__firebaseKey__ === null) {

return Promise.reject({message: 'Unknown item'});

}

return this.removeByKey(item.__firebaseKey__);

}

getByKey(key) {

return this.valueMap.get(key);

}

removeByKey(key) {

return new Promise((resolve, reject) => {

this.query.ref.child(key).remove((error) => {

if (error) {

reject(error);

return;

}

resolve(key);

});

});

}

clear() {

return new Promise((resolve, reject) => {

let query = this.query.ref;

query.remove((error) => {

if (error) {

reject(error);

return;

}

resolve();

});

});

}

}

Don’t be overwhelmed if you feel like this appears to be overly complex. Hopefully, after we review it, you will feel more confident with how it works.

Firebase Events and Handlers

We begin by importing environment, which provides the configuration information for Firebase. Next, the constructor configures the Firebase instance, creates a query to listen for changes, and calls listenToQuery, which wires each of the following events to its handler: child_added to onItemAdded, child_removed to onItemRemoved, child_changed to onItemChanged, and child_moved to onItemMoved.

There is also a function stopListeningToQuery. This simply turns off the subscriptions previously defined.

The onItemAdded function takes two parameters, snapshot and previousKey. It first tries to obtain the value from the snapshot calling the helper function, valueFromSnapshot. Next, it determines the index by seeing if the previousKey is not null and finding the index of the previousKey + 1 or by simply using 0. The valueMap object is next updated with the key and value. Finally, the value is spliced into the items array at the given index position.

The onItemRemoved function takes a single parameter, oldSnapshot, and accesses the key property. It then tries to find the corresponding object stored in the valueMap using the key. If no value is found, we simply return out of the function. If the value is not null, then we obtain the index from the items array. We then delete the key from the valueMap and, finally, splice the object from the items array using the index.

The onItemChanged function takes two parameters, snapshot and previousKey. It first tries to obtain the value from the snapshot by calling the helper function, valueFromSnapshot. Next, it tries to find the existing object stored in the valueMap using the __firebaseKey__ from the value. If no oldValue is found, we simply return out of the function. Otherwise, we remove the oldValue from the valueMap and set the new updated value in the valueMap. Finally, we splice in the new value while removing the old value in the items array.
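The previousKey positioning used by onItemAdded can be sketched with a plain array and a Map. The insertAfter function and the keys below are made up for this illustration. The idea is the same as in the class: a null previousKey means the item goes first, otherwise it goes right after the item stored under previousKey.

```javascript
const items = [];
const valueMap = new Map();

// Inserts value right after the item stored under previousKey,
// or at the front when previousKey is null or unknown.
function insertAfter(previousKey, key, value) {
  const previous = previousKey !== null ? valueMap.get(previousKey) : undefined;
  const index = previous !== undefined ? items.indexOf(previous) + 1 : 0;
  valueMap.set(key, value);
  items.splice(index, 0, value);
}

insertAfter(null, 'k1', {msg: 'first'});
insertAfter('k1', 'k3', {msg: 'third'});
insertAfter('k1', 'k2', {msg: 'second'}); // lands between first and third

console.log(items.map(item => item.msg)); // [ 'first', 'second', 'third' ]
```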

Data Functions

Each of the following functions will utilize the Firebase API to affect the Realtime Database directly. The user interface will react accordingly when a given event is fired and the corresponding handler handles the event, thus updating the items array. With Aurelia this is a simple repeat.for binding.

The add function takes in a single parameter, item. This simply uses the Firebase API to push the item onto the watched query. This function is promise based and either returns the item upon success or rejects the promise passing the error.

The remove function takes in a single parameter, item. It first checks that the item is not null and that its __firebaseKey__ property is not null. It then simply removes the item by calling a helper function, removeByKey.

The getByKey function takes in a single parameter, key. It simply returns a lookup in the valueMap based on the key.

The removeByKey function takes in a single parameter, key. It returns a promise that attempts to remove the key from the underlying query. It will either resolve the key upon success or reject the promise passing the error.

The clear function returns a promise. It attempts to clear the underlying query. It will either resolve upon success or reject the promise passing the error.
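The add, removeByKey, and clear functions all wrap a completion callback in a promise the same way. If the repetition bothers you, one option is to pull the pattern into a helper. This is only a sketch: toPromise is a hypothetical helper, and fakeRef is a stand-in that imitates nothing more than a remove function taking an (error) completion callback.

```javascript
// Wraps an operation that reports completion through an (error) => {} callback.
function toPromise(operation, result) {
  return new Promise((resolve, reject) => {
    operation(error => {
      if (error) {
        reject(error);
        return;
      }
      resolve(result);
    });
  });
}

// Stand-in for a database reference; only remove(onComplete) is imitated.
const fakeRef = {
  removed: false,
  remove(onComplete) {
    this.removed = true;
    onComplete(null); // null error means success
  }
};

const pending = toPromise(done => fakeRef.remove(done), 'some-key');

pending.then(key => console.log(key)); // logs some-key
```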

Sample HTML Usage

Let’s now shift gears and look at a simple HTML binding that will respond to our simple chat example. Consider the following example:

<template>

<div class="chat-container">

<div class="chat-header">

<div class="flex-column-1">

<h4>Chatting in ${channel}</h4>

</div>

<div class="flex-column-none">

<span class="chat-header-close" click.delegate="toggleChatSidebar($event)">

<i class="fas fa-times"></i>

</span>

</div>

</div>

<div class="chat-content flex-row-1 margin-bottom-10 overflow-y-auto">

<ul class="chat-messages">

<li repeat.for="m of collection.items"

class="flex-column-1">

<div class="message-data ${username.toLowerCase() == m.username.toLowerCase() ? '' : 'align-right'}">

<span class="message-data-name">

<i class="fa fa-circle online"></i>

${m.name | properCase}

</span>

<span class="message-data-time">${m.created_date | dateFormat:'date-time'}</span>

<span class="message-data-delete"

click.delegate="deleteMessage($event, $index, channel, m)">

<i class="fa fa-times pointer-events-none"></i>

</span>

</div>

<div class="message ${username.toLowerCase() == m.username.toLowerCase() ? 'my-message' : 'other-message align-self-end'}">

${m.msg}

</div>

</li>

</ul>

</div>

<form id="messageInputForm" class="chat-input">

<div class="input-group">

<input id="messageInput"

class="form-control"

placeholder="Type your message..."

value.bind="message"

keydown.delegate="handleKeydown($event)">

<div class="input-group-append">

<button class="btn btn-outline-secondary" type="button"

click.delegate="sendMessage($event)">SEND</button>

</div>

</div>

</form>

</div>

</template>

Of all the markup we see, we are really only concerned with the following line:

<li repeat.for="m of collection.items"

class="flex-column-1">

As you can see, we can have multiple channels for chatting. Each channel would be an instance of the Firebase Collection and we access the collection by referencing the items property.

Sample View Model

In the view model constructor you can simply create a new instance of the Firebase Collection and pass in a path.

import environment from '../../../environment';

import {FirebaseCollection} from '../../../models/firebase-collection';

export class Chat {

static inject() {

return [Element];

}

collection;

channel = 'lobby';

constructor(element) {

this.element = element;

this.path = `channels/${this.channel}`;

}

attached() {

this.collection = new FirebaseCollection(this.path);

}

detached() {

this.collection.stopListeningToQuery();

}

}

As you can see from above, the view model is minimalistic. Now that we have a FirebaseCollection, we can simply instantiate as many as we need throughout our application and gain all the benefits of a real-time collection.

Here is a screen shot of the chat panel in action:

Building LOB applications in AngularJS

I had a great time speaking last night at the Charlotte Enterprise Developers Guild. Thank you everybody for coming out and listening. As promised, here are slides from my talk.

TechTalent South – Presentation

I had a great time speaking at TechTalent South today. Thank you for your invitation and allowing me to share some of my knowledge and experience.

Here are the slides for review as promised.

Thanks again!

Upcoming Speaking Events

I have several speaking events going on this week and next.

Tomorrow, I will be speaking at TechTalent South. My topic will be, “Building Applications with Ubiquity in Mind”

This coming Friday, 8/22, I will be hosting and presenting the Unity 2D Workshop. This event will be all day.

Next Tuesday, I am speaking to the Charlotte Enterprise Developers Guild. My topic will be, “Building Line of Business Applications using AngularJS”

Hope to see you at one of these events!

.NET Uri, database not found, and encoding

I ran into an interesting issue when trying to debug some code a while back. I had written a dynamic system that would allow a person to authenticate and then pick a database in which to perform their operations. For the most part, this system worked well and allowed me to keep things simple. The part that was tricky was that I had written my reporting and dashboard engine to use a single Razor .cshtml file to handle all requests. It in turn pulled the report definition or dashboard definition XML files from the database. The problem came when one of the SQL Server databases that the system was deployed to was not the default instance but a “named instance”.

In my code, I would simply construct a Uri object and pass in the string that represented the parameters that I needed for the given page. However, since a named instance uses the ‘\’ character, this caused some problems: my simple string representation was not encoding the character, so when the server received the request, it was not a valid server name. I spent hours thinking that it was a problem with the SQL Server configuration. It wasn’t until I debugged the .cshtml file that I realized that the name of the server was not coming across correctly and that I was not encoding the name properly. Here is what I finally came up with to correct the issue:

string address = string.Format(

"../PreviewReturnSetupBillDesigner.cshtml?Key={0}&ServerName={1}&DatabaseName={2}",

101,

// Verbatim string so the backslash is not read as an escape sequence (\t)

HttpUtility.UrlEncode(@"monticello\test"),

HttpUtility.UrlEncode("sample"));

Uri uri = new Uri(address, UriKind.RelativeOrAbsolute);

Previously, I was just passing in the server and database names without encoding them. This worked for all default installations of SQL Server but once you had a named instance in place, all bets were off.

Hopefully this will help anyone from spinning their wheels if they are doing anything similar…

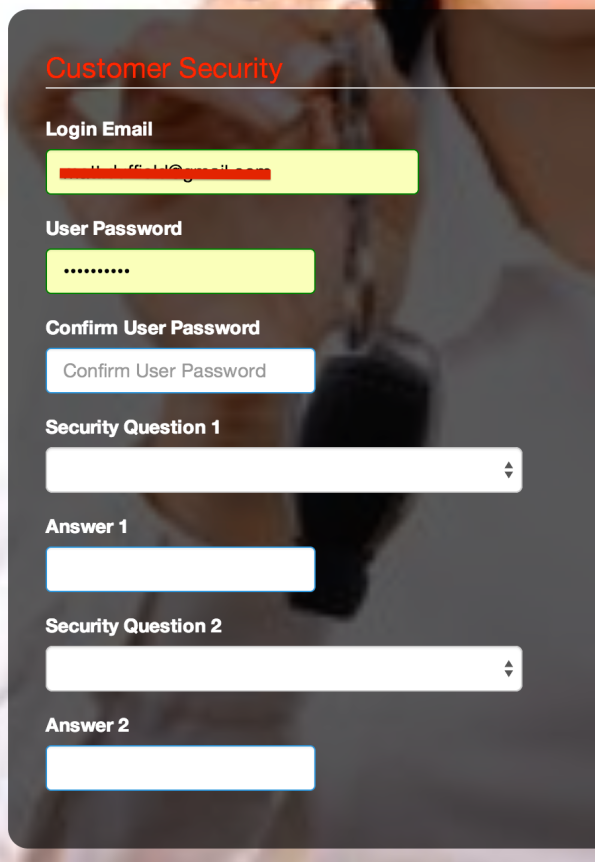

Chrome auto fills email and password

I recently ran into a weird situation when testing some of my screens with Chrome. Consider the following screen shot:

As you can see, Chrome is auto filling my email address and password. As this is a screen that I am designing, I was very surprised to see this behavior. It took me a while but I found various solutions to the problem. The one that I elected to use was more by choice of the designer I have built to create these screens than any other reason.

Place the following code before “Login Email”, as in my example:

<input type="password" style="display:none;" />

As you can see from the code above, we are basically creating an Input tag with the Type of Password. We also set the Style to Display:None. This ensures that it isn’t visible and fixes our issue with Chrome.

I hope that this is a temporary fix and that Chrome will work properly in the future, but until then, we have ourselves a workaround.

Here is a screen shot of the form now working properly:

Hope this helps….